Stop Shipping LLM Agents Without a Safety Net: Using MLflow's ResponsesAgent Interface


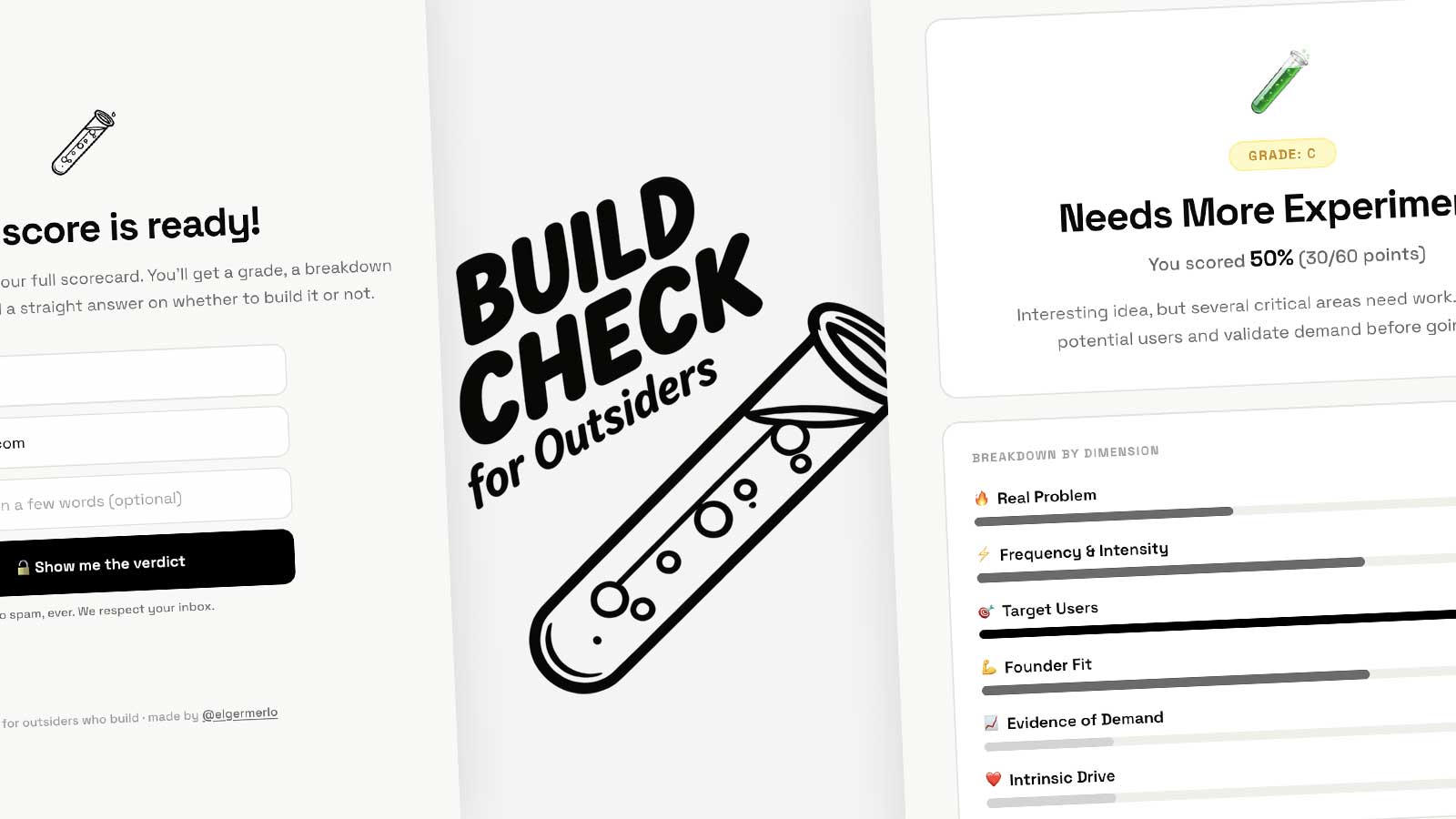

Source: DEV Community

Here's a pattern I keep seeing: someone builds a genuinely useful LLM agent, it works great, gets deployed, and then six months later nobody knows what version is running, how to update it safely, or what changed between the version that worked and the one that doesn't. The capability was never the hard part.

MLflow 3.6 introduced the ResponsesAgent interface, and it's worth your attention if you're building agents for anything more serious than a weekend project. You implement two methods (load_context and predict), speak an OpenAI-compatible request/response schema, and get automatic integration with MLflow's tracking, versioning, and deployment infrastructure.

The part I like: it doesn't care what's inside. Whether it's LangChain, DSPy, or custom Python, you keep your internals and standardize only the outer contract. Tool call tracking and token usage logging come along for free.

Full walkthrough here, including how to structure the code and wire it into Databricks: 👉 https://pattersonconsultingtn.com/blo
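To make the "standardize the outer contract" idea concrete, here is a minimal, stdlib-only sketch of the shape described above. It deliberately does not import MLflow: `AgentRequest`, `AgentResponse`, and `WrappedAgent` are hypothetical stand-ins invented for illustration, and the message layout is only an approximation of an OpenAI-style schema. In a real project you would subclass `mlflow.pyfunc.ResponsesAgent` and use its own request and response types instead.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Optional


@dataclass
class AgentRequest:
    """Hypothetical stand-in for an OpenAI-style request (role/content messages)."""
    input: list


@dataclass
class AgentResponse:
    """Hypothetical stand-in for an OpenAI-style response (list of output items)."""
    output: list = field(default_factory=list)


class WrappedAgent:
    """Illustrative wrapper: callers only ever see load_context and predict."""

    def __init__(self) -> None:
        self._engine: Optional[Callable[[str], str]] = None

    def load_context(self, context: dict) -> None:
        # One-time setup. A real agent would build its LangChain chain,
        # DSPy program, or custom client here; this sketch uses an echo function.
        prefix = context.get("prefix", "echo")
        self._engine = lambda text: f"{prefix}: {text}"

    def predict(self, request: AgentRequest) -> AgentResponse:
        if self._engine is None:
            raise RuntimeError("load_context must be called before predict")
        # Treat the most recent user message as the prompt.
        user_texts = [m["content"] for m in request.input if m.get("role") == "user"]
        prompt = user_texts[-1] if user_texts else ""
        reply = self._engine(prompt)
        # Return the reply in a fixed shape, whatever produced it internally.
        item: dict[str, Any] = {
            "type": "message",
            "role": "assistant",
            "content": [{"type": "output_text", "text": reply}],
        }
        return AgentResponse(output=[item])


agent = WrappedAgent()
agent.load_context({"prefix": "echo"})
response = agent.predict(AgentRequest(input=[{"role": "user", "content": "hello"}]))
print(response.output[0]["content"][0]["text"])
```

The point of the design is that swapping the echo function for a real framework changes only `load_context`; anything that calls `predict` keeps working unchanged, which is what makes versioning and safe rollouts tractable.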